The New Gatekeeper of Scientific Integrity

Scientific publishing is not slow because scholars are lazy. It is slow because trust takes time.

Yet submissions are exploding. Journals are drowning in manuscripts. Reviewers are exhausted. Editorial boards are overstretched.

This is exactly where AI in manuscript screening and peer review is stepping in: not to replace human judgment, but to protect it.

The conversation is no longer hypothetical. Artificial intelligence is already embedded in editorial workflows across leading publishers. The real question isn’t whether AI belongs in peer review. It’s whether we implement it ethically and intelligently.

Why Manuscript Screening Is Under Pressure

Global research output is increasing at an unprecedented rate. As Wikipedia’s overview of peer review summarizes, scholarly evaluation remains the backbone of academic credibility, yet the system was designed for a slower era.

Editors today face:

- Plagiarism at scale (see Understanding Plagiarism in Academic Writing)

- AI-generated manuscripts

- Image manipulation

- Fabricated data

- Reviewer fatigue

- Delays stretching to months

The need for structured, automated triage has become urgent.

At ClinicaPress, we’ve previously discussed publication bottlenecks in editorial insights such as The Ethics of Clinical Publishing. The pattern is clear: integrity systems must evolve alongside research output.

What AI Actually Does in Manuscript Screening

Let’s be precise.

AI does not “review” research in the intellectual sense. It screens.

Modern AI tools can:

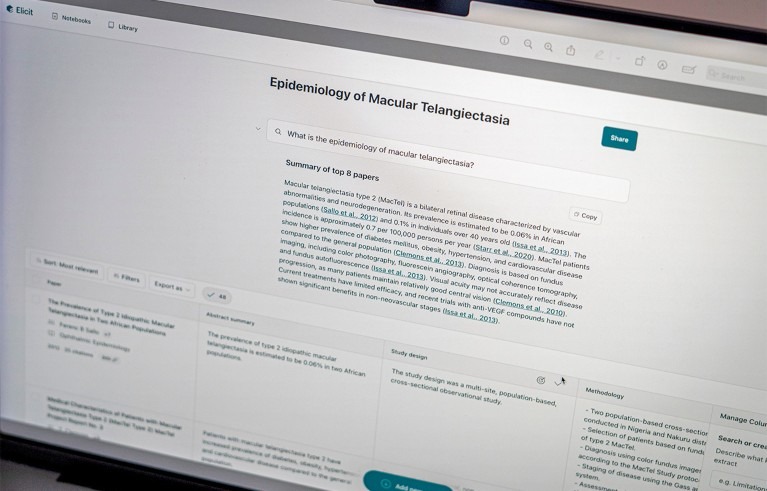

- Detect plagiarism and text recycling

- Flag statistical inconsistencies

- Identify manipulated images

- Spot citation anomalies (see Altmetrics in Medicine: Measuring Impact Beyond Citations)

- Detect AI-generated writing patterns

- Assess formatting and reporting guideline compliance

This automation allows editors to focus on scientific merit rather than technical policing.
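To make the first item in the list above concrete, here is a minimal sketch of how text overlap might be scored. It uses Jaccard similarity over three-word shingles, which is one common technique and not necessarily what any particular screening product uses. The function names, the `prior_texts` mapping, and the 0.3 threshold are all illustrative assumptions.

```python
import re


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of k-word shingles (overlapping word n-grams) in text."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard_similarity(a: str, b: str, k: int = 3) -> float:
    """Shared shingles divided by all distinct shingles, from 0.0 to 1.0."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa or not sb:
        return 0.0  # too short to compare
    return len(sa & sb) / len(sa | sb)


def flag_for_editor(submission: str, prior_texts: dict[str, str],
                    threshold: float = 0.3) -> list[tuple[str, float]]:
    """List prior documents whose overlap meets the threshold, highest first.

    The threshold is an assumed value for illustration. A flag is a prompt
    for human review, not a finding of plagiarism.
    """
    scores = [(doc_id, jaccard_similarity(submission, text))
              for doc_id, text in prior_texts.items()]
    return sorted((s for s in scores if s[1] >= threshold),
                  key=lambda s: s[1], reverse=True)
```

The output is a ranked list for an editor to inspect. Legitimate overlap, such as a methods section reused from the authors' own preprint, will score high too, which is why the score alone should never decide anything.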

In clinical sciences, similar systems are already transforming documentation workflows, particularly in AI-assisted medical record review services, where structured data extraction reduces human error while maintaining oversight.

The concept is parallel: machine efficiency, human accountability.

AI in Peer Review: Assistance, Not Replacement

When integrated into peer review, AI typically assists in:

- Suggesting potential reviewers based on expertise mapping

- Identifying conflicts of interest

- Screening for ethical compliance statements

- Evaluating adherence to CONSORT or PRISMA reporting standards
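The first two items above, reviewer suggestion and conflict-of-interest screening, can be sketched together. This is a simplified illustration that assumes expertise is represented as keyword sets and that a conflict means shared authorship, shared institution, or prior co-authorship. The `Reviewer` and `Manuscript` classes and their fields are hypothetical, and real systems typically draw on richer signals.

```python
from dataclasses import dataclass, field


@dataclass
class Reviewer:
    name: str
    institution: str
    keywords: set[str]
    coauthors: set[str] = field(default_factory=set)


@dataclass
class Manuscript:
    authors: set[str]
    institutions: set[str]
    keywords: set[str]


def has_conflict(reviewer: Reviewer, ms: Manuscript) -> bool:
    """True if the reviewer is an author, shares an author's institution,
    or has previously co-authored with one of the authors."""
    return (reviewer.name in ms.authors
            or reviewer.institution in ms.institutions
            or bool(reviewer.coauthors & ms.authors))


def suggest_reviewers(ms: Manuscript, pool: list[Reviewer],
                      top_n: int = 3) -> list[tuple[str, int]]:
    """Rank conflict-free reviewers by number of shared keywords."""
    candidates = []
    for reviewer in pool:
        if has_conflict(reviewer, ms):
            continue
        overlap = len(reviewer.keywords & ms.keywords)
        if overlap > 0:
            candidates.append((reviewer.name, overlap))
    # Highest overlap first; ties broken alphabetically for stable output.
    candidates.sort(key=lambda c: (-c[1], c[0]))
    return candidates[:top_n]
```

The result is a shortlist for the handling editor, who still makes the invitation decision.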

Organizations like the World Health Organization emphasize research transparency and reporting standards in global health publishing. AI systems help enforce these standards consistently.

But here’s the line that cannot be crossed:

AI must never replace expert judgment in evaluating novelty, methodological rigor, or clinical implications.

That responsibility belongs to qualified reviewers — not algorithms.

How to Know If an Article Is Peer Reviewed

AI also influences how readers assess credibility.

Common questions among students and early-career researchers include:

- How to know if an article is peer reviewed

- How to tell if an article is peer reviewed

- How do I know if an article is peer reviewed

- How do you know if an article is peer reviewed

- How to know if something is peer reviewed

- How to tell if something is peer reviewed

Read our guide on Peer-Reviewed Journal Explained: 10 Reasons Why It Matters for Academic Research.

These questions reflect a trust gap.

Here’s a practical framework:

| Indicator | What to Check | Why It Matters |
| --- | --- | --- |
| Journal Website | States “peer-reviewed” in scope | Transparency in editorial process |
| Editorial Board | Named experts listed | Accountability |
| Indexing | Indexed in PubMed, Scopus, Web of Science | Quality screening |
| DOI Presence | Persistent identifier | Publication traceability |
| Review Timeline | Submission and acceptance dates | Evidence of evaluation |

You can also verify journals through resources like the U.S. National Library of Medicine journal database, which lists indexed biomedical journals.

AI screening tools increasingly help journals maintain these structural signals of credibility.

The Ethical Risks of AI in Peer Review

Automation introduces power — and risk.

Major concerns include:

- Algorithmic bias

- False positives in plagiarism detection

- Over-reliance on automated scoring

- Data privacy concerns

- Lack of transparency in decision logic

As highlighted in investigative reporting by Nature News, AI adoption in publishing must be accompanied by governance frameworks.

Blind trust in AI is just as dangerous as blind trust in authors.

That’s why reputable journals implement:

- Human verification of AI flags

- Clear editorial policies on AI usage

- Disclosure requirements for AI-assisted writing

- Audit trails for algorithmic decisions
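Two of these safeguards, human verification of AI flags and audit trails, can be sketched as a small data structure. The `Flag` class, its status values, and the helper functions are hypothetical names chosen for illustration, not a description of any journal's actual system.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Flag:
    manuscript_id: str
    check: str                  # e.g. "similarity", "image", "statistics"
    detail: str
    status: str = "pending"     # "pending", then "confirmed" or "dismissed"
    audit_log: list[dict] = field(default_factory=list)

    def log(self, actor: str, action: str, note: str) -> None:
        """Append a timestamped entry (append-only by convention)."""
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "note": note,
        })


def raise_flag(manuscript_id: str, check: str, detail: str, tool: str) -> Flag:
    """An automated tool raises a flag; it starts out unverified."""
    flag = Flag(manuscript_id, check, detail)
    flag.log(actor=tool, action="raised", note=detail)
    return flag


def resolve(flag: Flag, editor: str, confirmed: bool, note: str) -> Flag:
    """A named editor confirms or dismisses the flag, with a written reason."""
    if flag.status != "pending":
        raise ValueError("flag has already been resolved")
    if not editor or not note:
        raise ValueError("resolution needs a named editor and a written reason")
    flag.status = "confirmed" if confirmed else "dismissed"
    flag.log(actor=editor, action=flag.status, note=note)
    return flag


def can_affect_decision(flag: Flag) -> bool:
    """Only human-confirmed flags may feed into an editorial decision."""
    return flag.status == "confirmed"
```

The design choice worth noting is that a raw AI flag has no effect on its own: until an editor confirms it, `can_affect_decision` returns `False`, and every step leaves a record of who did what and why.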

AI vs. Peer Review: Complement, Not Competition

Traditional peer review, in which qualified experts evaluate the work of other researchers, remains the gold standard for scientific evaluation.

Experts critique:

- Study design

- Statistical validity

- Ethical compliance

- Interpretation accuracy

- Relevance to clinical practice

AI cannot replicate contextual expertise developed through years of training.

Instead, AI enhances peer review by:

- Reducing administrative burden

- Filtering low-quality submissions early

- Shortening turnaround time

- Providing structured reviewer dashboards

The result? Reviewers spend more time on substance and less on formatting errors.

Efficiency is not the enemy of integrity — negligence is.

The Future: Hybrid Intelligence Models

The most sustainable model is hybrid:

- Stage 1: AI Triage

  - Format checks

  - Similarity scans

  - Reference validation

  - Statistical anomaly alerts

- Stage 2: Human Evaluation

  - Methodological critique

  - Ethical appraisal

  - Clinical relevance

  - Interpretative judgment

- Stage 3: AI Post-Decision Support

  - Language refinement suggestions

  - Data visualization checks

  - Compliance auditing
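The three stages can be sketched as a small pipeline in which automated steps only produce alerts or tasks, and the decision itself can only be recorded by a named human. Everything here is an illustrative assumption: the `Submission` fields, the individual checks, and thresholds such as the 8000-word limit and the 0.3 similarity cutoff.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Submission:
    manuscript_id: str
    word_count: int
    similarity_score: float        # 0.0-1.0, assumed output of an external scan
    unresolved_references: int     # citations that could not be matched
    alerts: list[str] = field(default_factory=list)
    decision: Optional[str] = None
    decided_by: Optional[str] = None


# A check inspects a submission and returns an alert message, or None if it passes.
Check = Callable[[Submission], Optional[str]]


def format_check(s: Submission) -> Optional[str]:
    return "word count exceeds limit" if s.word_count > 8000 else None


def similarity_check(s: Submission) -> Optional[str]:
    return "high text similarity" if s.similarity_score > 0.3 else None


def reference_check(s: Submission) -> Optional[str]:
    return "unresolved references" if s.unresolved_references > 0 else None


def ai_triage(s: Submission, checks: list[Check]) -> Submission:
    """Stage 1: collect alerts for the editor. Never sets a decision."""
    s.alerts = [msg for check in checks if (msg := check(s)) is not None]
    return s


def human_evaluation(s: Submission, editor: str, decision: str) -> Submission:
    """Stage 2: only a named human can record the editorial decision."""
    if not editor:
        raise ValueError("a decision must be attributed to a named editor")
    if decision not in {"accept", "revise", "reject"}:
        raise ValueError(f"unknown decision: {decision}")
    s.decision, s.decided_by = decision, editor
    return s


def post_decision_support(s: Submission) -> list[str]:
    """Stage 3: queue support tasks, but only after a human decision exists."""
    if s.decision is None:
        raise ValueError("post-decision support requires a human decision")
    if s.decision == "reject":
        return []
    return ["language refinement suggestions",
            "data visualization checks",
            "compliance audit"]
```

Note that `ai_triage` has no code path that writes to `decision`. Keeping that separation structural, instead of relying on policy alone, is one way to make "machine-assisted, human-governed" hard to violate by accident.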

This layered approach mirrors how AI is being governed elsewhere in healthcare, for example in guidance on AI in medical technologies published by agencies such as the U.S. Food and Drug Administration.

If AI requires regulation in clinical devices, it certainly requires oversight in clinical publishing.

What This Means for Authors

Writers can no longer ignore AI screening realities.

Before submission:

- Run plagiarism checks

- Verify statistical calculations

- Ensure reporting guideline adherence

- Disclose AI writing assistance transparently

- Maintain raw data integrity

Because here’s the blunt truth:

If AI detects preventable errors before a human ever reads your work, credibility takes a hit.

Academic publishing is evolving into a precision environment. Sloppiness will not survive automated scrutiny.
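As one example of a pre-submission check from the list above, authors can recompute reported p-values from their test statistics before an automated screener does it for them. The sketch below handles only the simplest case, a two-sided p-value from a z statistic, using the standard normal distribution. The function names and the rounding tolerance are illustrative assumptions, and other tests (t, F, chi-square) need their own distributions.

```python
import math


def two_sided_p_from_z(z: float) -> float:
    """Two-sided p-value for a z statistic under the standard normal.

    Uses the identity p = erfc(|z| / sqrt(2)).
    """
    return math.erfc(abs(z) / math.sqrt(2))


def p_value_consistent(z: float, reported_p: float, decimals: int = 3) -> bool:
    """Check whether a reported p-value matches the recomputed one.

    'decimals' is the number of decimal places the p-value was reported to;
    the comparison allows for rounding at that precision.
    """
    tolerance = 0.5 * 10 ** -decimals + 1e-12
    return abs(two_sided_p_from_z(z) - reported_p) <= tolerance
```

For instance, `p_value_consistent(1.96, 0.05, decimals=2)` passes, while `p_value_consistent(1.96, 0.01, decimals=2)` does not. A mismatch is a reason to recheck the analysis or the manuscript text, not proof of an error.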

Conclusion: AI as Guardian, Not Judge

AI in manuscript screening and peer review is not a threat to science. Misuse is.

Used responsibly, AI:

- Protects editorial resources

- Strengthens fraud detection

- Improves efficiency

- Supports reviewer capacity

- Reinforces transparency

But it must operate within ethical guardrails, transparent policies, and human supervision.

The future of peer review is not machine-driven.

It is machine-assisted and human-governed.

Integrity remains a human responsibility.

Technology simply sharpens the lens.