A Red Flag for Reviewers

In medical publishing, numbers don’t earn trust on their own—interpretation does. Among all statistical metrics, the p-value is the most frequently misused and the fastest way to trigger reviewer skepticism when reported without context.

Reviewers are not anti-statistics. They are against shortcut science. When they see isolated p-values, especially ones framed as definitive proof, they read them as a warning sign rather than as evidence.

This article breaks down why p-values without context undermine credibility, what reviewers actually expect, and how authors should report results responsibly—without drifting into statistical theater.

Why Standalone P-Values Immediately Raise Reviewer Red Flags

A p-value is a conditional probability, not a conclusion. It only answers one narrow question: How compatible are the data with the null hypothesis under specific assumptions?

When authors report p-values without clarifying:

- the hypothesis,

- the clinical relevance,

- or the analytical assumptions,

reviewers interpret this as methodological thinness, not rigor.

This concern is not subjective. In its 2016 statement on p-values, the American Statistical Association formally warned that p-values do not measure effect size, importance, or truth. Major medical journals now align reviewer guidance with this position, which means context-free statistics rarely pass scrutiny.

In short, p-values without explanation signal that the author expects the number to do the intellectual work.

What a P-Value Actually Means—and What It Never Means

Interpreting p-values in medical research starts with knowing their boundaries.

A p-value can tell you:

- How unlikely data at least as extreme as those observed would be if the null hypothesis were true

- Whether a result meets a predefined statistical threshold

A p-value cannot tell you:

- Whether the effect is clinically meaningful

- Whether the study is well designed

- Whether results are reproducible

- Whether the hypothesis is true

This confusion is common, especially among early-career researchers entering medical research jobs, where statistical competence is often assumed but rarely trained explicitly.

Reviewers are acutely aware of this gap—and they compensate by demanding more explanation, not more decimals.
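One way to see why a small p-value cannot certify that a hypothesis is true is to simulate studies in which the null hypothesis holds by construction. The sketch below is illustrative only: the function names, the sample size of 30, and the known-SD z-test are assumptions chosen to keep the example within Python's standard library, not a recommended analysis.

```python
import random
from statistics import NormalDist, mean


def two_sided_p_known_sd(sample, null_mean=0.0, sd=1.0):
    """Two-sided z-test p-value for a sample mean, assuming a known SD."""
    z = (mean(sample) - null_mean) / (sd / len(sample) ** 0.5)
    return 2 * (1 - NormalDist().cdf(abs(z)))


def false_positive_rate(n_studies=5000, n_per_study=30, alpha=0.05, seed=1):
    """Share of simulated studies with p < alpha when the null is true."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_studies):
        # Every simulated study draws from the null: true mean 0, SD 1.
        sample = [rng.gauss(0.0, 1.0) for _ in range(n_per_study)]
        if two_sided_p_known_sd(sample) < alpha:
            hits += 1
    return hits / n_studies


rate = false_positive_rate()
print(f"Share of null studies with p < 0.05: {rate:.3f}")
```

Under these assumptions, roughly one in twenty studies with no real effect should still fall below 0.05. That is what the threshold permits by design, and it is why a single small p-value says little about truth or reproducibility.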

The 0.05 Threshold: Convention, Not a Verdict

The obsession with p < 0.05 is one of the most persistent myths in medical research.

That cutoff is a historical convention, not a scientific law. Yet manuscripts still frame results as:

- “significant” versus “non-significant”

- “positive” versus “negative”

High-impact journals, including Nature and The BMJ, have openly discouraged this binary thinking. Reviewers now expect authors to explain why a result matters, not just whether it crossed an arbitrary line.

A marginal p-value with strong clinical relevance may be more meaningful than a highly “significant” result with trivial impact. Context decides—not the threshold.
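The contrast can be made concrete with two hypothetical trials of a blood-pressure treatment. Every number below is invented for illustration, and the function `summarize_difference` is an assumed helper that uses a large-sample normal approximation with equal arm sizes and a common SD. For the small trial this approximation is slightly optimistic, and a t-based method would normally be preferred.

```python
from statistics import NormalDist


def summarize_difference(diff, sd, n_per_arm, level=0.95):
    """Return (p_value, ci_low, ci_high) for a difference in means.

    Normal approximation, assuming equal arm sizes and a common SD.
    """
    se = sd * (2 / n_per_arm) ** 0.5
    z = diff / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    crit = NormalDist().inv_cdf(0.5 + level / 2)
    return p_value, diff - crit * se, diff + crit * se


# Hypothetical trial A: very large sample, tiny reduction (0.5 mmHg).
p_a, lo_a, hi_a = summarize_difference(diff=0.5, sd=15.0, n_per_arm=20000)

# Hypothetical trial B: small sample, large reduction (9 mmHg).
p_b, lo_b, hi_b = summarize_difference(diff=9.0, sd=15.0, n_per_arm=20)

print(f"Trial A: p = {p_a:.4f}, 95% CI {lo_a:.2f} to {hi_a:.2f} mmHg")
print(f"Trial B: p = {p_b:.4f}, 95% CI {lo_b:.2f} to {hi_b:.2f} mmHg")
```

In this sketch, trial A falls well below 0.05 even though its whole interval sits under 1 mmHg. Trial B lands just above 0.05, yet its interval reaches roughly 18 mmHg. A binary "significant or not" reading would rank them the wrong way round for most clinical purposes.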

What Reviewers Expect Beyond the P-Value

When reviewers evaluate statistical sections, they look for coherence, not complexity.

They expect authors to report:

- Effect sizes alongside p-values

- Confidence intervals to show uncertainty

- Clear justification for chosen statistical tests

- Clinical or biological interpretation of findings
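As a rough sketch of what reporting all three quantities together can look like, the example below computes an effect size, an interval, and a p-value from the same raw data. The readings are invented, the helper names are hypothetical, and resampling methods (a permutation test and a percentile bootstrap) stand in for whatever test a real analysis plan would specify, purely to keep the example within Python's standard library.

```python
import random
from statistics import mean, stdev


def cohens_d(a, b):
    """Standardized mean difference using the pooled standard deviation."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * stdev(a) ** 2 + (nb - 1) * stdev(b) ** 2) / (na + nb - 2)
    return (mean(a) - mean(b)) / pooled_var ** 0.5


def permutation_p(a, b, n_perm=5000, seed=0):
    """Two-sided permutation p-value for the difference in means."""
    rng = random.Random(seed)
    observed = abs(mean(a) - mean(b))
    pooled = list(a) + list(b)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:len(a)]) - mean(pooled[len(a):]))
        if diff >= observed:
            extreme += 1
    # Add-one correction so the estimate is never exactly zero.
    return (extreme + 1) / (n_perm + 1)


def bootstrap_ci(a, b, n_boot=5000, level=0.95, seed=0):
    """Percentile bootstrap interval for the difference in means."""
    rng = random.Random(seed)
    diffs = sorted(
        mean(rng.choices(a, k=len(a))) - mean(rng.choices(b, k=len(b)))
        for _ in range(n_boot)
    )
    lo = diffs[int((1 - level) / 2 * n_boot)]
    hi = diffs[int((1 + level) / 2 * n_boot) - 1]
    return lo, hi


# Invented systolic blood pressure readings (mmHg), for illustration only.
treatment = [128, 131, 125, 122, 135, 127, 124, 130, 126, 129, 123, 121]
control = [134, 138, 129, 141, 133, 136, 130, 139, 135, 132, 137, 131]

diff = mean(treatment) - mean(control)
d = cohens_d(treatment, control)
p = permutation_p(treatment, control)
lo, hi = bootstrap_ci(treatment, control)

print(f"Mean difference {diff:.1f} mmHg (95% CI {lo:.1f} to {hi:.1f}), "
      f"Cohen's d = {d:.2f}, permutation p = {p:.4f}")
```

The printed sentence is the point: a reviewer sees the size of the effect, how uncertain it is, and the p-value in one place. Whether a difference of that size matters clinically still has to be argued in the text.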

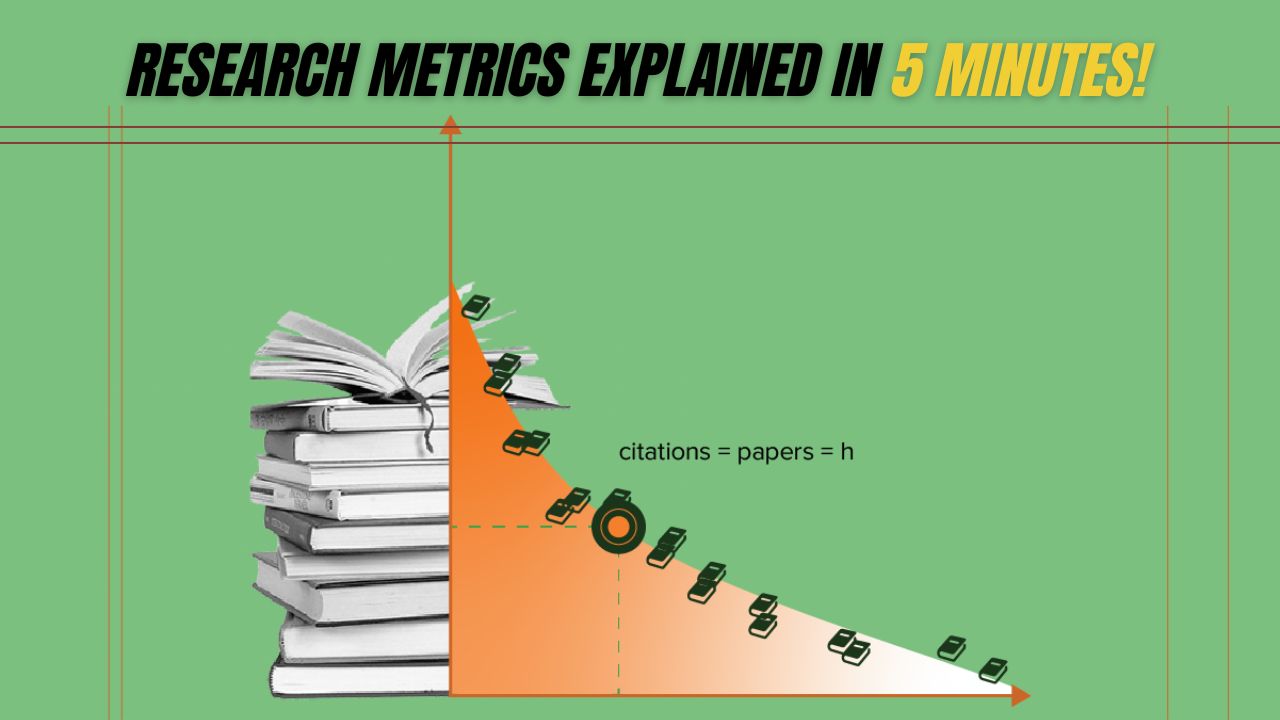

Below is a simplified comparison reviewers implicitly make when assessing manuscripts:

| Reporting Style | Reviewer Interpretation | Editorial Outcome |
| --- | --- | --- |
| p < 0.05 reported alone | Superficial statistical reporting | Major revision or rejection |
| P-value + effect size | Adequate but incomplete | Revision requested |
| P-value + CI + clinical relevance | Statistically responsible | Favorable review |
| Transparent limitations discussed | High integrity reporting | Strong acceptance potential |

This is why many manuscripts fail not on data quality, but on statistical storytelling.

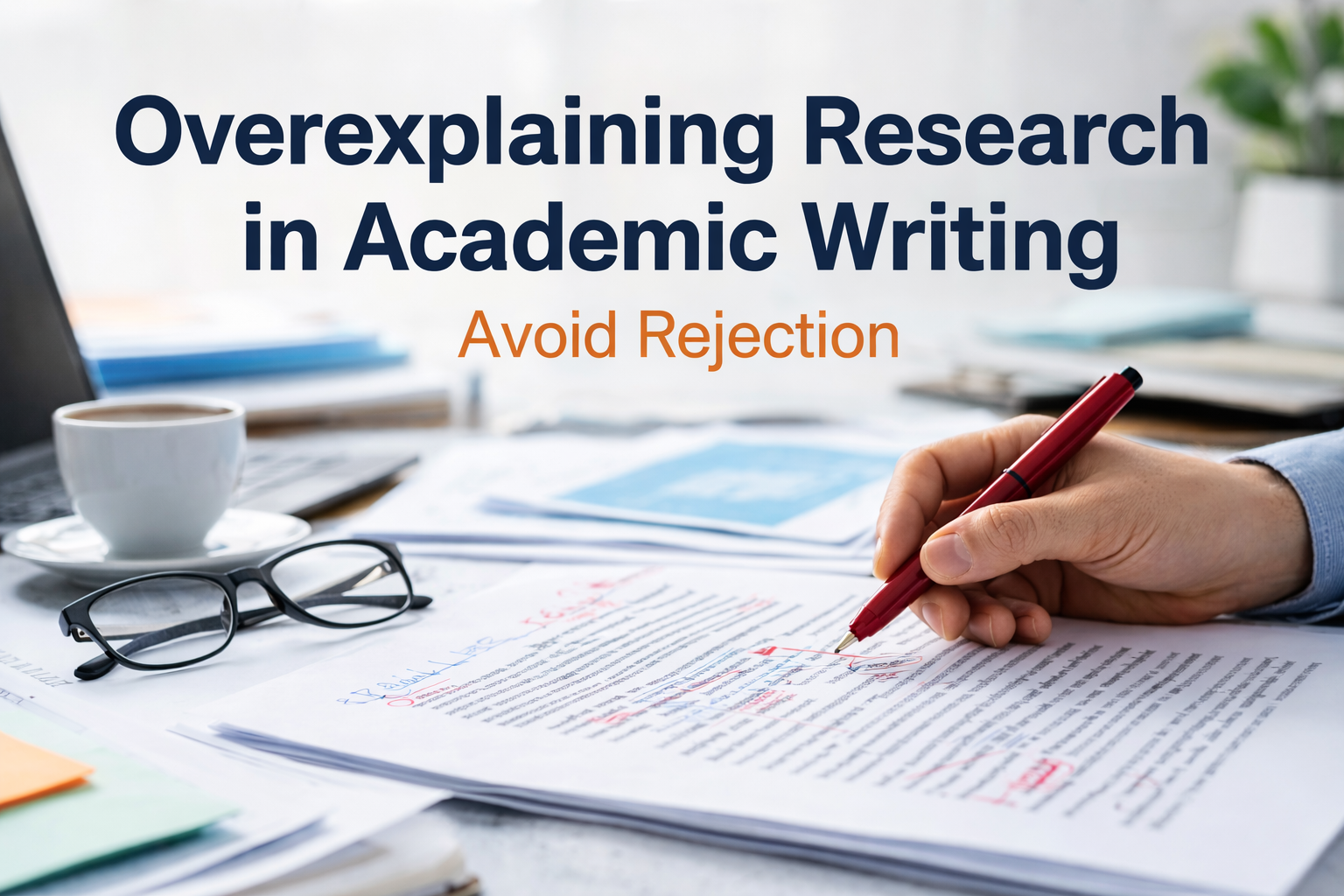

Visualizing the Problem: P-Value With vs Without Context

The difference between acceptable and weak reporting is rarely mathematical—it’s interpretive. Reviewers want to see how results fit into a broader evidence framework, not just numerical outcomes.

Biostatistics Literacy Is No Longer Optional

Modern reviewers expect authors to demonstrate a working knowledge of the biostatistical principles behind the p-value.

That includes understanding:

- Model assumptions

- Data distribution

- Multiple testing corrections

- Pre-specified vs post-hoc analyses
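Multiple testing corrections are the easiest item on this list to show in code. The sketch below implements the Holm step-down adjustment, one common option among several. The function name `holm_adjust` and the four raw p-values are assumptions made up for the example.

```python
def holm_adjust(p_values):
    """Holm step-down adjusted p-values, returned in the input order."""
    m = len(p_values)
    # Indices of the p-values, from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        # The smallest p-value is multiplied by m, the next by m - 1, and so on.
        candidate = min(1.0, (m - rank) * p_values[idx])
        # Adjusted values must not decrease as the raw p-values increase.
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted


# Hypothetical raw p-values from four secondary outcomes.
raw = [0.012, 0.030, 0.041, 0.20]
adj = holm_adjust(raw)

for r, a in zip(raw, adj):
    print(f"raw p = {r:.3f} -> Holm-adjusted p = {a:.3f}")
```

With these made-up inputs, three raw p-values sit below 0.05, but only the smallest remains below it after adjustment (0.048); the next two both become 0.09. Reporting which correction was used, and across how many tests, is part of the context reviewers look for.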

Even practical matters, such as calculating a p-value in Excel, become problematic when authors rely on default formulas without understanding their limitations. Excel is a tool, not a statistical authority, and reviewers know the difference.

This shift reflects broader guidance from the World Health Organization, which emphasizes transparency and interpretability in clinical research reporting.

When Reviewers Say “Lacks Statistical Context,” This Is What They Mean

That phrase is not vague—it’s diagnostic.

It usually signals that:

- Results are statistically correct but poorly interpreted

- Clinical relevance is not discussed

- Effect sizes are missing

- Claims exceed the data

At ClinicaPress, we routinely see manuscripts stalled in revision cycles for this exact reason. Authors assume statistical significance equals scientific importance. Reviewers disagree.

Our editorial analysis of common statistical errors in clinical research shows this is one of the top preventable causes of rejection.


Career Implications for Medical Researchers

For researchers competing for grants, promotions, or long-term medical research jobs, statistical credibility compounds over time.

Editors remember authors who consistently:

- Overclaim based on weak statistics

- Rely solely on p-values

- Ignore reviewer feedback on interpretation

This is why ClinicaPress emphasizes statistical-editorial alignment in our guidance on how reviewers evaluate statistical rigor. Getting the math right is necessary—but never sufficient.

Moving Beyond P-Value Minimalism

Responsible reporting doesn’t mean abandoning p-values. It means demoting them from judge to supporting evidence.

Strong manuscripts:

- Use p-values to support—not replace—reasoning

- Pair them with effect sizes and confidence intervals

- Interpret findings in clinical and biological terms

- Acknowledge uncertainty openly

This approach reflects the evolving consensus summarized in Wikipedia’s overview of p-values and echoed across biomedical publishing.

At ClinicaPress, our framework for evidence-based result interpretation is built on one principle: numbers gain meaning only through explanation.

Final Takeaway for Authors and Reviewers

Reviewers are not hostile to p-values. They are hostile to lazy inference.

A p-value without context tells reviewers that the author wants statistics to think on their behalf. That mindset no longer survives peer review.

If you want your work respected—and published—treat p-values as one piece of evidence, not the verdict. Context isn’t optional. It’s scientific integrity.