The Cost of an Unclear Research Narrative

Brilliant data. Solid methodology. Ethical compliance.

And still — rejection.

This is the silent epidemic in academic publishing: the unclear research narrative. Not bad science. Not flawed statistics. Just a story that doesn’t land.

Editors don’t enjoy rejecting quality work. They reject confusion. If your argument is hard to follow, your work becomes hard to trust.

Let’s break down why this happens — and how to prevent it.

The Unclear Research Narrative: What It Really Means

An unclear research narrative isn’t about grammar mistakes. It’s about structural fog.

It happens when:

- The research question isn’t sharply defined

- The hypothesis shifts mid-paper

- Results are presented without interpretive guidance

- The discussion doesn’t circle back to the core objective

Even in advanced research systems, where teams use cutting-edge tools and analytics, clarity is still human work. Machines calculate. Humans persuade.

And academic publishing is persuasion under peer review.

According to the editorial standards outlined by the Committee on Publication Ethics (COPE), transparency and clarity are core pillars of responsible reporting. If reviewers struggle to understand your study’s logic, they question its integrity — even when the data is sound.

That’s the price of narrative failure.

Why Strong Data Isn’t Enough in Primary Research

In primary research, originality is your currency. But originality without clarity becomes noise.

Whether you’re conducting clinical trials, laboratory experiments, or atmospheric and environmental research, your paper must guide readers through:

- What problem exists

- Why it matters

- What you tested

- What you found

- What it changes

Miss one step — and the chain breaks.

In fields like environmental science, where interpretation directly informs policy, clarity is non-negotiable. The U.S. Environmental Protection Agency consistently emphasizes transparent reporting in environmental assessments because ambiguity can distort real-world decisions.

Academic publishing operates the same way. If your logic isn’t airtight, impact evaporates.

The Hidden Gap Between Research and Storytelling

Here’s the uncomfortable truth:

Most researchers are trained to analyze — not to narrate.

There’s a difference between presenting findings and constructing a coherent argument. Think of it like narrative painting: the same elements can form either a masterpiece or a scattered canvas.

A research paper is not a data dump. It is structured reasoning.

When the Introduction doesn’t set up tension, when Methods feel detached from objectives, or when the Discussion reads like a summary instead of an argument, reviewers disengage.

And disengagement equals rejection.

If you’ve read our analysis on editorial workflow breakdowns in peer review at ClinicaPress, you’ll know that a lack of clarity consistently ranks among the top rejection triggers.

How Advanced Research Systems Still Produce Confusion

Ironically, researchers working within advanced research systems — large labs, multi-center collaborations, AI-supported analytics — are at higher risk of narrative fragmentation.

Why?

Because:

- Multiple authors dilute voice

- Data teams write results

- Senior authors write discussion

- Research assistants draft methods

Without strong narrative oversight, the manuscript becomes stitched — not written.

Even guidance from institutions like the National Institutes of Health stresses the importance of coherent reporting structures in funded studies. Yet coherence often collapses in multi-author projects.

A research assistant might execute tasks flawlessly, but narrative architecture requires strategic alignment from the corresponding author.

Clarity doesn’t emerge automatically. It’s designed.

What “Clear” Actually Looks Like (The Antonym of “Unclear” in Action)

If “unclear” is confusion, its antonym is not just “clear.” It’s precise, structured, intentional.

Here’s how that translates in practice:

| Section | Unclear Version | Clear Version |
| --- | --- | --- |
| Introduction | Broad background without focus | Specific gap leading to a defined research question |
| Methods | Technical detail without linkage | Methods directly tied to objectives |
| Results | Raw findings presented sequentially | Findings organized around research questions |
| Discussion | Summary of results | Interpretation, implications, limitations |

Clarity means the reader never asks: Why am I reading this?

The World Health Organization’s guidance on research reporting stresses interpretive transparency in global health publications (see the WHO guidelines portal). Structure is ethical, not cosmetic.

An unclear research narrative isn’t just inefficient. It borders on irresponsible.

Atmospheric and Environmental Research: A Case Study in Narrative Stakes

In atmospheric and environmental research, clarity is critical because findings influence regulatory decisions, climate policy, and public perception.

A poorly structured paper on air pollution modeling can mislead policymakers.

A scattered climate-impact study can be misinterpreted by the media.

Consider how large-scale climate reporting synthesizes findings into cohesive narratives. Even platforms like National Geographic invest heavily in narrative framing when translating environmental data for public understanding.

Academic manuscripts demand the same discipline.

If your atmospheric dataset is powerful but your narrative is fragmented, your work risks invisibility.

The Structural Mistakes That Kill Strong Research

Here’s where most manuscripts fail:

- Objectives that shift between abstract and conclusion

- Overloaded literature reviews without a defined gap

- Results that don’t answer the stated question

- Discussions that introduce new hypotheses

- Weak transitions between sections

You can avoid these by checking that the abstract, research question, results, discussion, and conclusion all address the same stated objective.

We explored structural integrity in research manuscripts in our editorial breakdown at ClinicaPress, where coherence is treated as a strategic advantage — not an afterthought.

Because structure is persuasion.

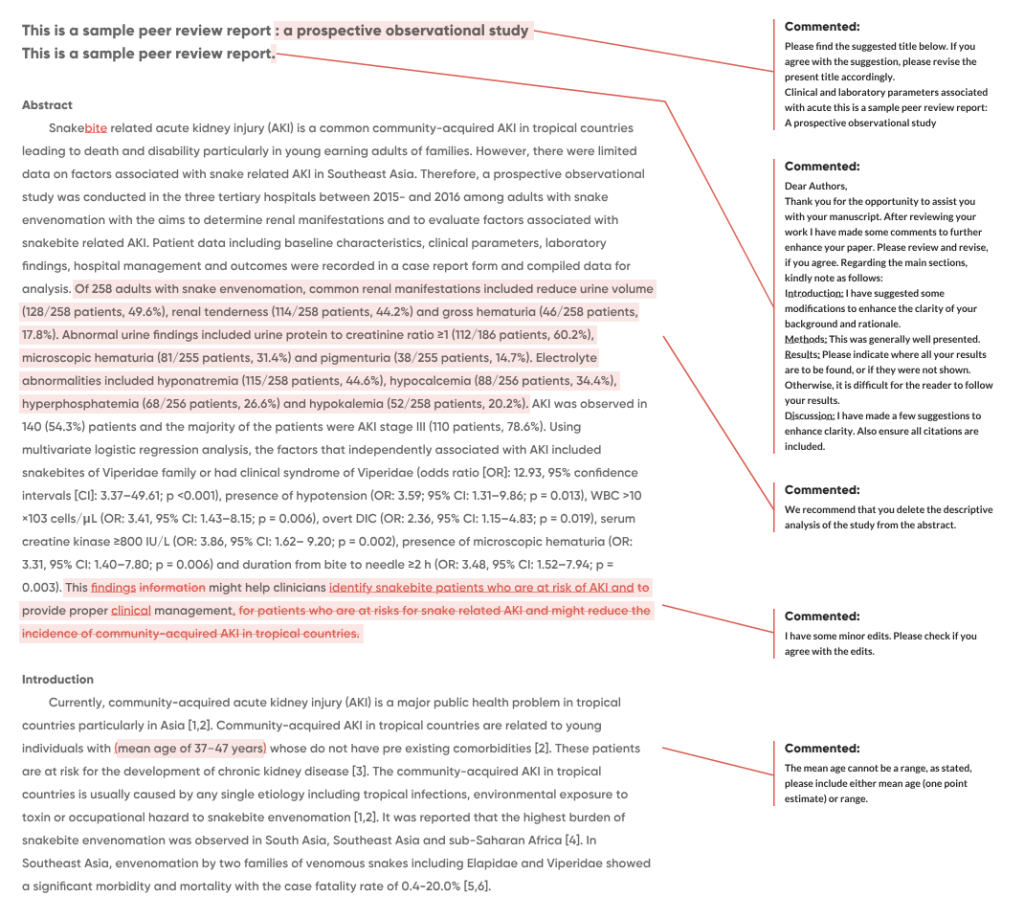

Peer Review Is a Clarity Stress Test

Peer review isn’t about catching typos. It’s about testing logic.

Reviewers ask:

- Is the argument coherent?

- Are conclusions supported?

- Is the research gap justified?

When reviewers struggle to follow your narrative, they assume deeper flaws.

If you’ve seen how inconsistent storytelling affects credibility in scientific publishing, our recent insight at ClinicaPress outlines the pattern clearly: confusion erodes confidence.

Even high-impact journals prioritize readability because clarity signals competence.

Academic Integrity and Narrative Responsibility

Let’s be blunt: clarity is ethical.

Ambiguity allows overinterpretation. Poor structure enables selective emphasis. An unclear research narrative opens the door to misrepresentation — even unintentionally.

Transparent storytelling aligns with publication ethics frameworks such as those promoted by COPE and global health authorities.

Clarity protects:

- Your credibility

- Your institution

- The scientific record

This isn’t about making your paper “sound better.” It’s about making your science defensible.

As we discussed in our analysis of publication transparency at ClinicaPress, editorial precision strengthens research integrity itself.

Final Reality Check

High-quality research does not fail because it lacks value.

It fails because it lacks clarity.

An unclear research narrative makes strong science invisible. In a competitive publishing landscape, invisibility equals irrelevance.

Data builds knowledge. Narrative delivers it.

If your story isn’t clear, your research isn’t reaching its potential.

And in academia, potential doesn’t count — impact does.